
This platform helps businesses build and manage teams of AI agents that work together on complex problems. It targets tasks that typically require human effort, such as resolving software issues or handling customer inquiries. It is aimed at software engineering teams, researchers, and anyone who needs to orchestrate multiple AI tools. Users can design agent interactions visually, track agent progress, and integrate different AI models. Its distinguishing features are a high level of automation (72.2% fully automated issue resolution on SWE-bench Verified) and a complete local setup that includes the supporting infrastructure.


> [!NOTE]
> Quick setup: use the Bootstrap repo to run prebuilt Platform Server and UI images locally: https://github.com/agynio/bootstrap

# Agyn Platform

A multi-service agents platform with a NestJS API, React UI, and filesystem-backed graph orchestration.

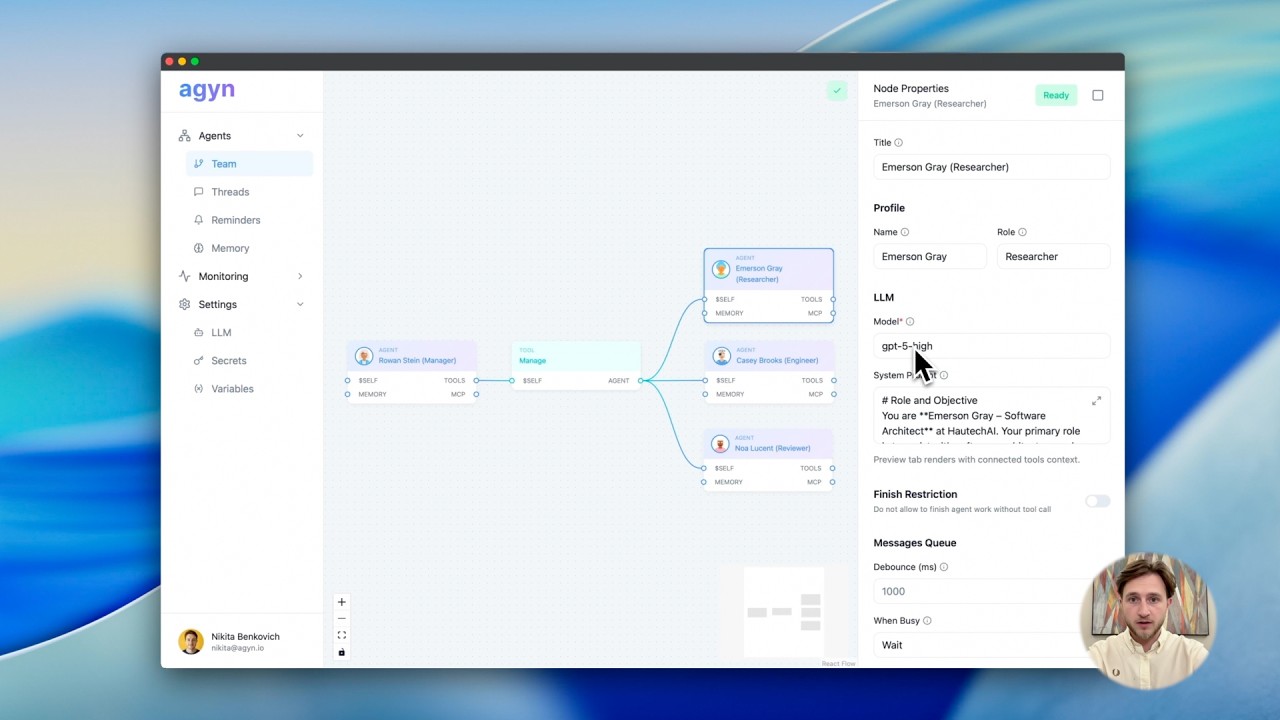

# Product Demo

[Watch the product demo on YouTube](https://www.youtube.com/watch?v=i4vZQ9vRvfY)

## Results on SWE-bench Verified

Using a coordinated multi-agent team, agyn achieved **72.2% fully automated issue resolution** on SWE-bench Verified, the highest result among GPT-5–based systems.

Full paper: https://arxiv.org/pdf/2602.01465

## Overview

Agyn Platform is a TypeScript monorepo that provides:

- A Fastify/NestJS server exposing HTTP and Socket.IO APIs for agent graphs, runs, context items, and persistence.

- A Vite/React frontend for building and operating agent graphs visually.

- Local operational components via Docker Compose: Postgres databases, LiteLLM, Vault, a Nix cache proxy (NCPS), and observability (Prometheus, Grafana, cAdvisor).

Intended use cases:

- Building agent graphs (nodes/edges) and storing them in a filesystem-backed graph dataset.

- Running, monitoring, and persisting agent interactions and tool executions.

- Integrating with LLM providers (LiteLLM or OpenAI) while tracking context/history.

- Operating a local development environment with supporting infra.
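To make the first use case more concrete, here is a minimal sketch of a graph of nodes and edges persisted as a JSON file on disk. The `AgentGraph`, `GraphNode`, and `GraphEdge` shapes, the `kind` values, and the one-file-per-graph layout are all assumptions made for illustration; the platform's actual graph schema and dataset layout are described in docs/graph/ and may differ.

```typescript
import * as fs from "node:fs";
import * as os from "node:os";
import * as path from "node:path";

// Hypothetical shapes for illustration only; not the platform's real schema.
interface GraphNode {
  id: string;
  kind: "agent" | "tool" | "trigger";
  config: Record<string, unknown>;
}

interface GraphEdge {
  source: string;
  target: string;
}

interface AgentGraph {
  name: string;
  nodes: GraphNode[];
  edges: GraphEdge[];
}

// Return every edge that points at a node id missing from the graph.
function findDanglingEdges(graph: AgentGraph): GraphEdge[] {
  const ids = new Set(graph.nodes.map((node) => node.id));
  return graph.edges.filter((edge) => !ids.has(edge.source) || !ids.has(edge.target));
}

// Write the graph to <dir>/<name>.json after a basic integrity check.
function saveGraph(dir: string, graph: AgentGraph): string {
  const dangling = findDanglingEdges(graph);
  if (dangling.length > 0) {
    throw new Error(`Graph "${graph.name}" has ${dangling.length} dangling edge(s)`);
  }
  fs.mkdirSync(dir, { recursive: true });
  const file = path.join(dir, `${graph.name}.json`);
  fs.writeFileSync(file, JSON.stringify(graph, null, 2), "utf8");
  return file;
}

// Read a graph back from disk (no schema validation in this sketch).
function loadGraph(file: string): AgentGraph {
  return JSON.parse(fs.readFileSync(file, "utf8")) as AgentGraph;
}

// Example: a two-node graph stored in a temporary directory.
const exampleGraph: AgentGraph = {
  name: "triage",
  nodes: [
    { id: "planner", kind: "agent", config: { model: "example-model" } },
    { id: "shell", kind: "tool", config: {} },
  ],
  edges: [{ source: "planner", target: "shell" }],
};

const exampleDir = fs.mkdtempSync(path.join(os.tmpdir(), "graphs-"));
const exampleFile = saveGraph(exampleDir, exampleGraph);
console.log(`Saved ${loadGraph(exampleFile).nodes.length} nodes to ${exampleFile}`);
```

Keeping each graph as a plain file makes it easy to diff and version, which is one plausible reason to prefer a filesystem-backed dataset over database rows.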

## Repository Structure

- docker-compose.yml — Development infra: Postgres, agents-db, Vault (+ auto-init), NCPS, LiteLLM, Redis, notifications, notifications-gateway, cAdvisor, Prometheus, Grafana.

- .github/workflows/
  - ci.yml — Linting, tests (server/UI), Storybook build + smoke, type-check build steps.
  - docker-ghcr.yml — Build and publish platform-server and platform-ui images to GHCR.

- packages/
  - platform-server/ — NestJS Fastify API and Socket.IO server
    - src/ — Application modules (bootstrap, graph, nodes, llm, infra, etc.)
    - prisma/ — Prisma schema and migrations (Postgres), uses AGENTS_DATABASE_URL
    - .env.example — Server env variables
    - Dockerfile — Multi-stage build; runs server with tsx
  - platform-ui/ — React + Vite SPA
    - src/ — UI source
    - .env.example — UI env variables (VITE_API_BASE_URL, etc.)
    - Dockerfile — Builds static assets; serves via nginx with API upstream templating
    - docker/entrypoint.sh, docker/nginx.conf.template — Runtime nginx config
  - llm/ — Internal library for LLM interactions (OpenAI client, zod).
  - shared/ — Shared types/helpers for UI/Server.
  - json-schema-to-zod/ — Internal helper library.

- docs/ — Platform documentation
  - README.md — Docs index
  - api/index.md — HTTP and socket API reference
  - config/, graph/, ui/, observability/, security/, etc. — Detailed technical docs

- monitoring/
  - prometheus/prometheus.yml — Scrape Prometheus and cAdvisor
  - grafana/provisioning/datasources/datasource.yml — Grafana Prometheus data source

- ops/
  - k8s/ncps/ — Example Service + ServiceMonitor manifests for NCPS

- vault/auto-init.sh — Dev-only Vault initialization and diagnostics script

- package.json — Workspace-level scripts and dependencies

- pnpm-workspace.yaml — Workspace globs

- vitest.config.ts — Root vitest configuration (packages/*)

- LICENSE — Apache 2.0 with Commons Clause + No-Hosting rider

- .prettierrc, eslint configs — Formatting/linting configurations

## Tech Stack

- Languages: TypeScript

- Backend:
  - NestJS 11 (@nestjs/common@^11.1), Fastify 5 (@nestjs/platform-fastify, fastify@^5.6.1)
  - Socket.IO 4.8 for server/client events
  - Prisma 6 (schema + migrations) with Postgres databases

- Frontend:
  - React 19, Vite 7, Tailwind CSS 4.1, Radix UI
  - Storybook 10 for component documentation

- LLM:
  - LiteLLM server (ghcr.io/berriai/litellm) or OpenAI (@langchain/* tooling)

- Tooling:
  - pnpm 10.5 (corepack-enabled), Node.js 20
  - Vitest 3 for testing; ESLint; Prettier

- Observability:
  - Prometheus, Grafana, cAdvisor

- Containers:
  - Docker Compose services (see docker-compose.yml)

Required versions:

- Node.js 20 (see .github/workflows/ci.yml and Dockerfiles)

- pnpm 10.5.0 (package.json, Dockerfiles)

- Postgres 16 (docker-compose.yml)

## Quick Start

### Prerequisites

- Node.js 20+

- pnpm 10.5 (corepack enable; corepack prepare pnpm@10.5.0)

- Docker Engine + Docker Compose plugin

- Git

Optional local services (provided in docker-compose.yml for dev):

- Postgres databases (postgres at 5442, agents-db at 5443)

- LiteLLM + Postgres (loopback port 4000)

- Vault (8200) with dev auto-init

- NCPS (Nix cache proxy) on 8501

- Prometheus (9090), Grafana (3000), cAdvisor (8080)

### Setup

1) Clone and install:

```bash
gh repo clone agynio/platform
cd platform
pnpm install
```

2) Configure environments:

- Server: copy packages/platform-server/.env.example to .env, then set:
  - AGENTS_DATABASE_URL (required) — e.g. postgresql://agents:agents@localhost:5443/agents
  - LLM_PROVIDER (optional) — defaults to `litellm`; set to `openai` to use direct OpenAI. Other values are rejected.
  - LITELLM_BASE_URL, LITELLM_MASTER_KEY (required for LiteLLM path)
  - Optional LiteLLM tuning: LITELLM_KEY_ALIAS (default `agents/<env>/<deployment>`), LITELLM_KEY_DURATION (`30d`), LITELLM_MODELS (`all-team-models`)
  - Optional: CORS_ORIGINS, VAULT_* (see packages/platform-server/src/core/services/config.service.ts and .env.example)

- UI: copy packages/platform-ui/.env.example to .env and set:
  - VITE_API_BASE_URL — e.g. http://localhost:3010
  - Optional: VITE_UI_MOCK_SIDEBAR (shows mock templates locally)
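The server-side rules above (a required database URL, a provider that defaults to `litellm`, and LiteLLM settings required only on the LiteLLM path) can be sketched as a small validation function. This is an illustrative sketch, not the code in config.service.ts: the `ServerConfig` type and the `parseServerEnv` and `isLlmProvider` names are hypothetical, and the `/v1` check reflects the note in the Configuration section that LITELLM_BASE_URL must not include `/v1`.

```typescript
type LlmProvider = "litellm" | "openai";

// Hypothetical config shape; the real ConfigService may expose more fields.
interface ServerConfig {
  agentsDatabaseUrl: string;
  llmProvider: LlmProvider;
  litellmBaseUrl?: string;
  litellmMasterKey?: string;
}

function isLlmProvider(value: string): value is LlmProvider {
  return value === "litellm" || value === "openai";
}

// Validate a process.env-like object against the rules listed in the README.
function parseServerEnv(env: Record<string, string | undefined>): ServerConfig {
  const agentsDatabaseUrl = env.AGENTS_DATABASE_URL;
  if (!agentsDatabaseUrl) {
    throw new Error("AGENTS_DATABASE_URL is required");
  }

  // Unset (or empty) falls back to the documented default.
  const provider = env.LLM_PROVIDER || "litellm";
  if (!isLlmProvider(provider)) {
    throw new Error(`Unsupported LLM_PROVIDER: ${provider}`);
  }
  if (provider === "openai") {
    return { agentsDatabaseUrl, llmProvider: provider };
  }

  const litellmBaseUrl = env.LITELLM_BASE_URL;
  const litellmMasterKey = env.LITELLM_MASTER_KEY;
  if (!litellmBaseUrl || !litellmMasterKey) {
    throw new Error("LITELLM_BASE_URL and LITELLM_MASTER_KEY are required for the LiteLLM path");
  }
  // The base URL should be the LiteLLM root, without a trailing /v1 segment.
  if (litellmBaseUrl.replace(/\/+$/, "").endsWith("/v1")) {
    throw new Error("LITELLM_BASE_URL must not include /v1");
  }
  return { agentsDatabaseUrl, llmProvider: provider, litellmBaseUrl, litellmMasterKey };
}

// Example with the development values used elsewhere in this README.
const exampleConfig = parseServerEnv({
  AGENTS_DATABASE_URL: "postgresql://agents:agents@localhost:5443/agents",
  LITELLM_BASE_URL: "http://127.0.0.1:4000",
  LITELLM_MASTER_KEY: "sk-dev-master-1234",
});
console.log(exampleConfig.llmProvider);
```

Failing fast on a bad value at startup is usually easier to debug than a provider error surfacing on the first LLM call.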

3) Start dev supporting services:

```bash
docker compose up -d

# Starts postgres (5442), agents-db (5443), vault (8200), ncps (8501),
# litellm (127.0.0.1:4000), redis (6379), notifications (9090),
# notifications-gateway (3011), docker-runner (50051)

# Optional monitoring (prometheus/grafana) lives in docker-compose.monitoring.yml.
# Enable with: docker compose -f docker-compose.yml -f docker-compose.monitoring.yml up -d
```
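Before moving on, it can help to confirm that the supporting services are accepting connections. The sketch below probes a few of the ports listed in the comment above using Node's built-in `net` module. The `devServices` list, `isPortOpen`, and `reportDevServices` are hypothetical helpers, not part of the repository, and an open TCP port only suggests that a service is up, not that it is healthy.

```typescript
import * as net from "node:net";

interface ServiceEndpoint {
  name: string;
  host: string;
  port: number;
}

// Ports taken from the docker compose comment above; adjust if you remap them.
const devServices: ServiceEndpoint[] = [
  { name: "postgres", host: "127.0.0.1", port: 5442 },
  { name: "agents-db", host: "127.0.0.1", port: 5443 },
  { name: "vault", host: "127.0.0.1", port: 8200 },
  { name: "ncps", host: "127.0.0.1", port: 8501 },
  { name: "litellm", host: "127.0.0.1", port: 4000 },
];

// Resolve true if a TCP connection succeeds within timeoutMs, false otherwise.
function isPortOpen(host: string, port: number, timeoutMs = 1000): Promise<boolean> {
  return new Promise((resolve) => {
    const socket = net.connect({ host, port });
    const finish = (open: boolean): void => {
      socket.destroy();
      resolve(open);
    };
    socket.setTimeout(timeoutMs);
    socket.once("connect", () => finish(true));
    socket.once("timeout", () => finish(false));
    socket.once("error", () => finish(false));
  });
}

// Probe all services in parallel and return a name -> reachable map.
async function reportDevServices(
  services: ServiceEndpoint[] = devServices,
): Promise<Map<string, boolean>> {
  const results = await Promise.all(
    services.map(async (svc) => [svc.name, await isPortOpen(svc.host, svc.port)] as const),
  );
  return new Map(results);
}
```

Calling `reportDevServices().then((status) => console.log(status))` prints which of the listed services are reachable from the host.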

4) Apply server migrations and generate Prisma client:

```bash
# set your AGENTS_DATABASE_URL appropriately
export AGENTS_DATABASE_URL=postgresql://agents:agents@localhost:5443/agents

pnpm --filter @agyn/platform-server exec prisma migrate deploy
pnpm --filter @agyn/platform-server run prisma:generate
```

### Run

- Development:

```bash
# Backend
pnpm --filter @agyn/platform-server dev

# UI (Vite dev server)
pnpm --filter @agyn/platform-ui dev

# docker-runner (gRPC server) runs as an external service
# (see the docker-runner repo or your deployment stack)
```

The server listens on PORT (default 3010; see packages/platform-server/src/index.ts and Dockerfile). The UI dev server uses the default Vite port.

- Production (Docker):
  - Use published images from GHCR (see .github/workflows/docker-ghcr.yml):
    - ghcr.io/agynio/platform-server
    - ghcr.io/agynio/platform-ui
  - Example: server (env must include AGENTS_DATABASE_URL, LITELLM_BASE_URL, LITELLM_MASTER_KEY; optionally set LLM_PROVIDER):

```bash
docker run --rm -p 3010:3010 \
  -e AGENTS_DATABASE_URL=postgresql://agents:agents@host.docker.internal:5443/agents \
  -e LLM_PROVIDER=litellm \
  -e LITELLM_BASE_URL=http://host.docker.internal:4000 \
  -e LITELLM_MASTER_KEY=sk-dev-master-1234 \
  ghcr.io/agynio/platform-server:latest
```

  - Example: UI (configure API upstream via API_UPSTREAM):

```bash
docker run --rm -p 8080:80 \
  -e API_UPSTREAM=http://host.docker.internal:3010 \
  ghcr.io/agynio/platform-ui:latest
```

## Configuration

Key environment variables (server) from packages/platform-server/.env.example and src/core/services/config.service.ts:

- Required:
  - AGENTS_DATABASE_URL — Postgres connection for platform-server
  - LLM_PROVIDER (optional) — defaults to `litellm`; set to `openai` to use direct OpenAI. Other values are rejected.
  - LITELLM_BASE_URL — LiteLLM root URL (must not include /v1; default host in docker-compose is 127.0.0.1:4000)
  - LITELLM_MASTER_KEY — admin key for LiteLLM

- Optional LLM

[truncated…]